2015

Woodring, Jonathan; Petersen, Mark; Schmeiber, Andre; Patchett, John; Ahrens, James; Hagen, Hans

In Situ Eddy Analysis in a High-Resolution Ocean Climate Model Proceedings Article

In: IEEE, 2015, (LA-UR-pending).

Tags: climate modeling, collaborative development, feature analysis, feature extraction, high performance computing, In situ analysis, mesoscale eddies, ocean modeling, online analysis, revision control, simulation, software engineering, supercomputing

@inproceedings{Woodring2015,

title = {In Situ Eddy Analysis in a High-Resolution Ocean Climate Model},

author = {Jonathan Woodring and Mark Petersen and Andre Schmeiber and John Patchett and James Ahrens and Hans Hagen},

url = {http://ieeexplore.ieee.org/document/7192723/},

year = {2015},

date = {2015-01-01},

publisher = {IEEE},

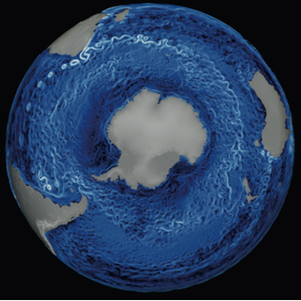

abstract = {An eddy is a feature associated with a rotating body of fluid, surrounded by a ring of shearing fluid. In the ocean, eddies are 10 to 150 km in diameter, are spawned by boundary currents and baroclinic instabilities, may live for hundreds of days, and travel for hundreds of kilometers. Eddies are important in climate studies because they transport heat, salt, and nutrients through the world’s oceans and are vessels of biological productivity. The study of eddies in global ocean-climate models requires large-scale, high-resolution simulations. This poses a problem for feasible (timely) eddy analysis, as ocean simulations generate massive amounts of data, causing a bottleneck for traditional analysis workflows. To enable eddy studies, we have developed an in situ workflow for the quantitative and qualitative analysis of MPAS-Ocean, a high-resolution ocean climate model, in collaboration with the ocean model research and development process. Planned eddy analysis at high spatial and temporal resolutions will not be possible with a post-processing workflow due to various constraints, such as storage size and I/O time, but the in situ workflow enables it and scales well to ten-thousand processing elements.},

note = {LA-UR-pending},

keywords = {climate modeling, collaborative development, feature analysis, feature extraction, high performance computing, In situ analysis, mesoscale eddies, ocean modeling, online analysis, revision control, simulation, software engineering, supercomputing},

pubstate = {published},

tppubtype = {inproceedings}

}

2011

Woodring, Jonathan; Mniszewski, Susan; Brislawn, Christopher M.; DeMarle, David; Ahrens, James

Revisiting wavelet compression for large-scale climate data using JPEG 2000 and ensuring data precision Proceedings Article

In: Large Data Analysis and Visualization (LDAV), 2011 IEEE Symposium on, pp. 31–38, IEEE 2011, (LA-UR-pending).

Tags: climate modeling, coding and information theory, data compaction and compression, JPEG 2000, Wavelet

@inproceedings{woodring2011revisiting,

title = {Revisiting wavelet compression for large-scale climate data using JPEG 2000 and ensuring data precision},

author = {Jonathan Woodring and Susan Mniszewski and Christopher M. Brislawn and David DeMarle and James Ahrens},

url = {http://datascience.dsscale.org/wp-content/uploads/2016/06/RevisitingWaveletComp.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {Large Data Analysis and Visualization (LDAV), 2011 IEEE Symposium on},

pages = {31--38},

organization = {IEEE},

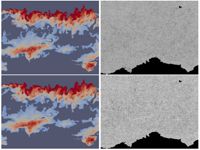

abstract = {We revisit wavelet compression by using a standards-based method to reduce large-scale data sizes for production scientific computing. Many of the bottlenecks in visualization and analysis come from limited bandwidth in data movement, from storage to networks. The majority of the processing time for visualization and analysis is spent reading or writing large-scale data or moving data from a remote site in a distance scenario. Using wavelet compression in JPEG 2000, we provide a mechanism to vary data transfer time versus data quality, so that a domain expert can improve data transfer time while quantifying compression effects on their data. By using a standards-based method, we are able to provide scientists with the state-of-the-art wavelet compression from the signal processing and data compression community, suitable for use in a production computing environment. To quantify compression effects, we focus on measuring bit rate versus maximum error as a quality metric to provide precision guarantees for scientific analysis on remotely compressed POP (Parallel Ocean Program) data.},

note = {LA-UR-pending},

keywords = {climate modeling, coding and information theory, data compaction and compression, JPEG 2000, Wavelet},

pubstate = {published},

tppubtype = {inproceedings}

}
