2007

Ahrens, James; Desai, Nehal; McCormick, Patrick; Martin, Ken; Woodring, Jonathan

A modular extensible visualization system architecture for culled prioritized data streaming Proceedings Article

In: Electronic Imaging 2007, pp. 64950I–64950I, International Society for Optics and Photonics 2007, (LA-UR-07-5141).

Tags: data streaming, visualization

@inproceedings{ahrens2007modular,

title = {A modular extensible visualization system architecture for culled prioritized data streaming},

author = {James Ahrens and Nehal Desai and Patrick McCormick and Ken Martin and Jonathan Woodring},

url = {http://datascience.dsscale.org/wp-content/uploads/2016/06/AModularExtensibleVisualizationSystemArchitectureForCulledPrioritizedDataStreaming.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Electronic Imaging 2007},

pages = {64950I--64950I},

organization = {International Society for Optics and Photonics},

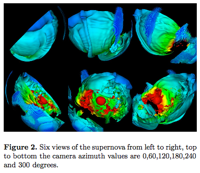

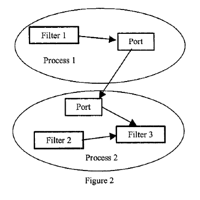

abstract = {Massive dataset sizes can make visualization difficult or impossible. One solution to this problem is to divide a dataset into smaller pieces and then stream these pieces through memory, running algorithms on each piece. This paper presents a modular data-flow visualization system architecture for culling and prioritized data streaming. This streaming architecture improves program performance both by discarding pieces of the input dataset that are not required to complete the visualization, and by prioritizing the ones that are. The system supports a wide variety of culling and prioritization techniques, including those based on data value, spatial constraints, and occlusion tests. Prioritization ensures that pieces are processed and displayed progressively based on an estimate of their contribution to the resulting image. Using prioritized ordering, the architecture presents a progressively rendered result in a significantly shorter time than a standard visualization architecture. The design is modular, such that each module in a user-defined data-flow visualization program can cull pieces as well as contribute to the final processing order of pieces. In addition, the design is extensible, providing an interface for the addition of user-defined culling and prioritization techniques to new or existing visualization modules.},

note = {LA-UR-07-5141},

keywords = {data streaming, visualization},

pubstate = {published},

tppubtype = {inproceedings}

}

2001

Ahrens, James; Brislawn, Kristi; Martin, Ken; Geveci, Berk; Law, Charles; Papka, Michael

Large-scale data visualization using parallel data streaming Journal Article

In: Computer Graphics and Applications, IEEE, vol. 21, no. 4, pp. 34–41, 2001, (LA-UR-01-0970).

Tags: data streaming, LargeScaleVisualization, MPI, ParallelVisualization, VTK

@article{ahrens2001large,

title = {Large-scale data visualization using parallel data streaming},

author = {James Ahrens and Kristi Brislawn and Ken Martin and Berk Geveci and Charles Law and Michael Papka},

url = {http://datascience.dsscale.org/wp-content/uploads/2016/06/LargeScaleDataVisualizationUsingParallelDataStreaming.pdf},

year = {2001},

date = {2001-01-01},

journal = {Computer Graphics and Applications, IEEE},

volume = {21},

number = {4},

pages = {34--41},

publisher = {IEEE},

abstract = {Effective large-scale data visualization remains a significant and important challenge with analysis codes already producing terabyte results on clusters with thousands of processors. Frequently the analysis codes produce distributed data and consume a significant portion of the available memory per node. This paper presents an architectural approach to handling these visualization problems based on mixed dataset topology parallel data streaming. This enables visualizations on a parallel cluster that would normally require more storage/memory than is available while at the same time achieving high code reuse. Results from a variety of hardware and visualization configurations are discussed with data sizes ranging near to a petabyte.},

note = {LA-UR-01-0970},

keywords = {data streaming, LargeScaleVisualization, MPI, ParallelVisualization, VTK},

pubstate = {published},

tppubtype = {article}

}
