2014

Nouanesengsy, Boonthanome; Woodring, Jonathan; Patchett, John; Myers, Kary; Ahrens, James

ADR visualization: A generalized framework for ranking large-scale scientific data using Analysis-Driven Refinement Proceedings Article

In: Large Data Analysis and Visualization (LDAV), 2014 IEEE 4th Symposium on, pp. 43–50, IEEE 2014, (LA-UR-pending).

Tags: adaptive mesh refinement, ADR, Analysis-Driven Refinement, big data, data triage, focus+context, hardware architecture, large-scale data, parallel processing, picture/image generation, prioritization, scientific data, viewing algorithms

@inproceedings{nouanesengsy2014adr,

title = {ADR visualization: A generalized framework for ranking large-scale scientific data using Analysis-Driven Refinement},

author = {Boonthanome Nouanesengsy and Jonathan Woodring and John Patchett and Kary Myers and James Ahrens},

url = {http://datascience.dsscale.org/wp-content/uploads/2016/06/ADRVisualization.pdf},

year = {2014},

date = {2014-01-01},

booktitle = {Large Data Analysis and Visualization (LDAV), 2014 IEEE 4th Symposium on},

pages = {43--50},

organization = {IEEE},

abstract = {Prioritization of data is necessary for managing large-scale scientific data, as the scale of the data implies that there are only enough resources available to process a limited subset of the data. For example, data prioritization is used during in situ triage to scale with bandwidth bottlenecks, and used during focus+context visualization to save time during analysis by guiding the user to important information. In this paper, we present ADR visualization, a generalized analysis framework for ranking large-scale data using Analysis-Driven Refinement (ADR), which is inspired by Adaptive Mesh Refinement (AMR). A large-scale data set is partitioned in space, time, and variable, using user-defined importance measurements for prioritization. This process creates a prioritization tree over the data set. Using this tree, selection methods can generate sparse data products for analysis, such as focus+context visualizations or sparse data sets.},

note = {LA-UR-pending},

keywords = {adaptive mesh refinement, ADR, Analysis-Driven Refinement, big data, data triage, focus+context, hardware architecture, large-scale data, parallel processing, picture/image generation, prioritization, scientific data, viewing algorithms},

pubstate = {published},

tppubtype = {inproceedings}

}

Su, Yu; Agrawal, Gagan; Woodring, Jonathan; Myers, Kary; Wendelberger, Joanne; Ahrens, James

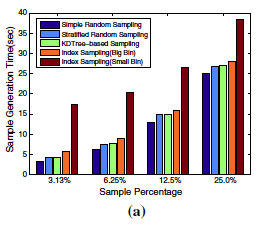

Effective and efficient data sampling using bitmap indices Journal Article

In: Cluster Computing, pp. 1-20, 2014, ISSN: 1386-7857, (LA-UR-pending).

Tags: big data, Bitmap indexing, data sampling, Multi-resolution, parallel processing

@article{,

title = {Effective and efficient data sampling using bitmap indices},

author = {Yu Su and Gagan Agrawal and Jonathan Woodring and Kary Myers and Joanne Wendelberger and James Ahrens},

url = {http://datascience.dsscale.org/wp-content/uploads/2016/06/EffectiveAndEfficientDataSamplingUsingBitmapIndeces.pdf},

doi = {10.1007/s10586-014-0360-5},

issn = {1386-7857},

year = {2014},

date = {2014-01-01},

journal = {Cluster Computing},

pages = {1--20},

publisher = {Springer US},

abstract = {With growing computational capabilities of parallel machines, scientific simulations are being performed at finer spatial and temporal scales, leading to a data explosion. The growing sizes are making it extremely hard to store, manage, disseminate, analyze, and visualize these datasets, especially as neither the memory capacity of parallel machines, memory access speeds, nor disk bandwidths are increasing at the same rate as the computing power. Sampling can be an effective technique to address the above challenges, but it is extremely important to ensure that dataset characteristics are preserved, and the loss of accuracy is within acceptable levels. In this paper, we address the data explosion problems by developing a novel sampling approach, and implementing it in a flexible system that supports server-side sampling and data subsetting. We observe that to allow subsetting over scientific datasets, data repositories are likely to use an indexing technique. Among these techniques, we see that bitmap indexing can not only effectively support subsetting over scientific datasets, but can also help create samples that preserve both value and spatial distributions over scientific datasets. We have developed algorithms for using bitmap indices to sample datasets. We have also shown how only a small amount of additional metadata stored with bitvectors can help assess loss of accuracy with a particular subsampling level. Some of the other properties of this novel approach include: (1) sampling can be flexibly applied to a subset of the original dataset, which may be specified using a value-based and/or a dimension-based subsetting predicate, and (2) no data reorganization is needed, once bitmap indices have been generated. We have extensively evaluated our method with different types of datasets and applications, and demonstrated the effectiveness of our approach.},

note = {LA-UR-pending},

keywords = {big data, Bitmap indexing, data sampling, Multi-resolution, parallel processing},

pubstate = {published},

tppubtype = {article}

}
